I Wrote About AI Ethics. Now It Is Time To Experience It

This article explores how the release of a new AI-focused film from the Center for Humane Technology reflects a broader shift from discussing AI ethics to experiencing its real-world impact on leadership and society.

In my last article, I argued that AI ethics is not about the machines, it is about us. Now, I am taking that conversation from theory to experience.

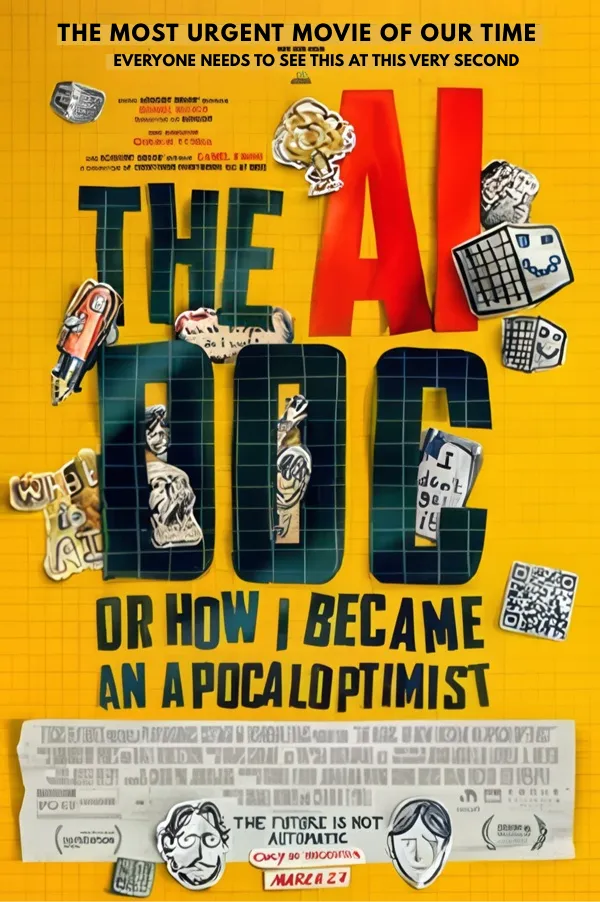

The new film from the Center for Humane Technology¹ has officially been released, and I plan to go see it, not as a spectator, but as someone who has spent four decades watching how technology reshapes behavior, leadership, and society.

This is not just another documentary moment.

It is a checkpoint.

From AI Ethics to Real-World Experience

Writing about ethics is one thing.

Experiencing how those ideas are translated, interpreted, and felt by a broader audience is something else entirely.

That is what makes this moment important.

For years, conversations around AI risk lived in:

Research papers

Closed-door discussions

Technical communities

Now they are entering mainstream awareness.

And when that happens, the conversation changes.

It becomes less about what is technically possible, and more about what is socially acceptable.

Less about capability, more about consequence.

Why This Film Matters Now

Timing matters more than most people realize.

This film is landing at a point where:

AI adoption is accelerating inside organizations

Leaders are making decisions faster than they can fully evaluate them

Human systems are under strain, cognitively and culturally

This is where I see the real risk.

Not in the technology itself, but in the gap between capability and readiness².

That gap is where poor decisions get made.

It is where ethics becomes optional.

It is where leadership gets exposed.

What I Will Be Watching For

When I see this film, I am not looking for confirmation of what I already believe.

I am looking for signals.

How does it frame responsibility, as individual or systemic?

Does it challenge behavior, or simply describe outcomes?

Does it create clarity, or amplify fear?

Most importantly, does it move people from awareness to action?

Because awareness alone does not change outcomes.

Behavior does.

The Leadership Lens Most People Miss

After decades of observing technology adoption cycles, one pattern is consistent.

Technology does not fail organizations.

Leadership readiness does.

That is the lens I will bring into this experience.

This perspective is informed by decades of work at the intersection of AI strategy, neuroscience, and leadership.

It is also what led me to write Brain Science For The Soul³.

Because the real question is not whether we can build powerful systems.

We already have.

The question is whether we have the cognitive and emotional capacity to use them wisely, especially under pressure.

This Is Not the End of the Conversation

This article is not a review.

It is a marker.

A transition point between what we say we believe about AI ethics and how we respond when those ideas are reflected back to us in a shared, public experience.

I will follow up after seeing the film with a deeper perspective.

Not just on what the film presents, but on what it reveals about us.

The film was released today, March 27, and is listed in major theaters and on Apple TV.

I want to hear back from you when you see it. Let's get the conversation going. Because ultimately, that is where the real story is.

FAQ

What is the Center for Humane Technology and why is it relevant to AI?

The Center for Humane Technology is a research and advocacy organization focused on aligning technology with human well-being. Its work highlights the societal and behavioral impacts of AI systems.

Why is leadership important in AI adoption?

AI adoption is not only a technical challenge. It requires leaders to make high-stakes decisions under uncertainty, manage cognitive load, and ensure ethical use of intelligent systems.

What is the biggest risk in AI adoption today?

The biggest risk is the gap between technological capability and human readiness. When systems scale faster than judgment, poor decisions and unintended consequences increase.

How does AI impact human behavior?

AI systems influence attention, decision-making, and cognitive patterns. Without awareness and intentional design, they can amplify bias, stress, and misaligned incentives.

References

Center for Humane Technology. The AI Doc, or How I Became an Apocaloptimist. https://centerforhumanetechnology.substack.com

Adriana Vela. Brain Science For The Soul. https://author.adrianavela.expert/brain-science-for-the-soul

Adriana Vela. AI Ethics Is Not About the Machines, It Is About Us. https://markettecnexus.com/post/ai-ethics-is-not-about-the-machines-it-is-about-us